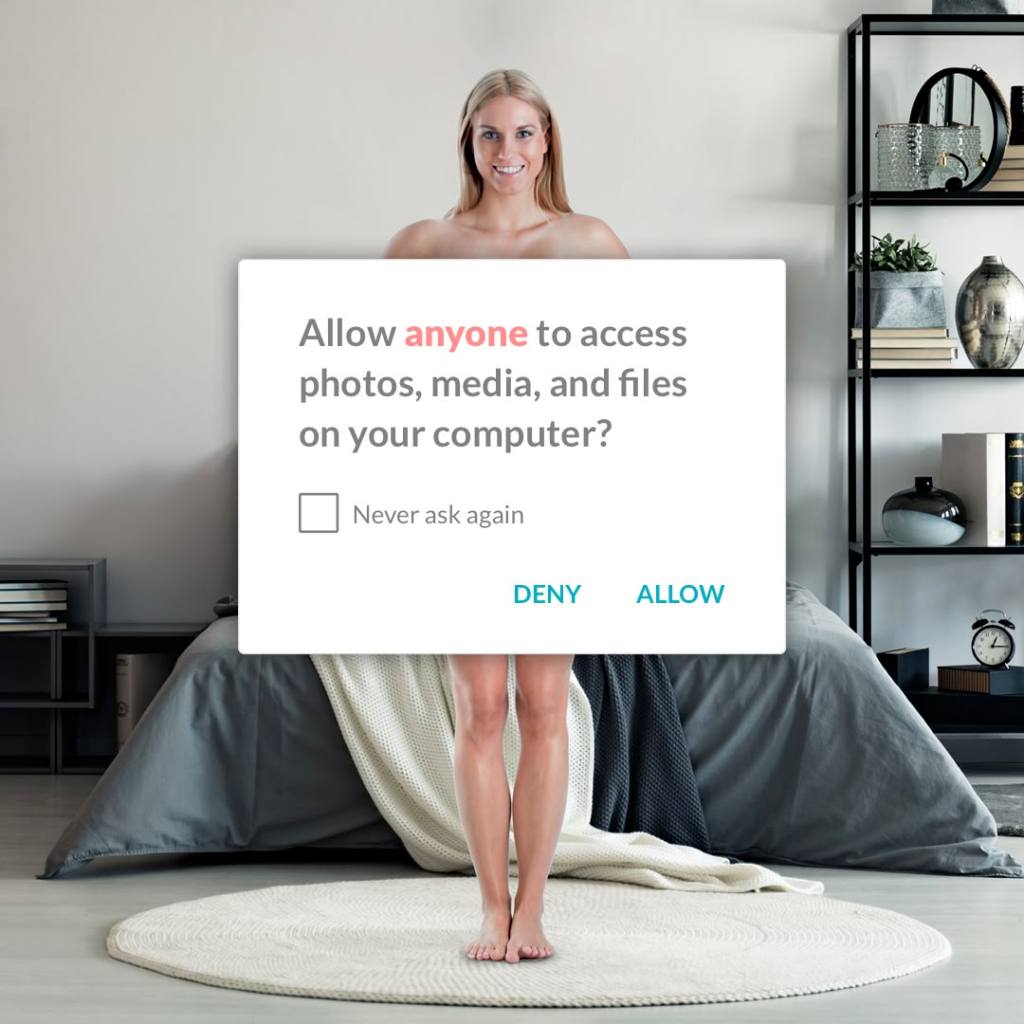

An application requests access: location, contacts, activity, behavioural data.

Consent is given, often without meaningful deliberation. Participation in digital life increasingly depends on acceptance.

This interaction reflects a deeper legal uncertainty.

Privacy remains a protected value. Yet its doctrinal structure is under strain.

The question is no longer simply whether privacy is protected, but what—precisely—the law is attempting to regulate.

From secrecy to control

Historically, privacy was associated with confidentiality and seclusion. It protected a private sphere insulated from intrusion.

Modern data protection law reframed this model. Under the General Data Protection Regulation (GDPR), privacy is structured around control over personal data. Article 5 establishes principles governing processing, while Articles 12–22 provide rights of access, rectification, erasure, and objection.

Processing must rest on one of the six lawful bases listed in Article 6(1). Consent, under Article 6(1)(a), is the basis most closely tied to the idea of individual control.

This framework assumes that individuals can meaningfully determine how their data is used.

That assumption is increasingly unstable.

The expansion of personal data

Article 4(1) GDPR defines personal data broadly as any information relating to an identified or identifiable person. This definition has proven adaptable, encompassing not only provided data but also observed behaviour.

Contemporary systems, however, generate a further category: inferred data.

Profiles are constructed through analytics. Predictions are derived from patterns. Individuals are categorised based on probabilistic assessments.

This shift is legally significant.

The GDPR is more effective at regulating the collection of data than the production of knowledge derived from it.

Profiling and its limits

Article 22 GDPR addresses automated decision-making: decisions based solely on automated processing, including profiling, that produce legal or similarly significant effects. It gives individuals the right not to be subject to such decisions and, where they are permitted, requires safeguards including the right to obtain human intervention.

Yet its scope is limited.

Many forms of profiling operate below this threshold. They influence, rather than determine, outcomes: what content is shown, which opportunities are prioritised, how risk is assessed.

In Schrems II (C-311/18), a case concerning transfers of personal data to the United States, the Court of Justice of the European Union emphasised the importance of effective protection against data processing that undermines fundamental rights. However, that ruling addressed cross-border transfers, and the broader ecosystem of inference remains only partially regulated.

Individuals may therefore retain formal rights over input data, while lacking visibility over the conclusions drawn from it.

Consent and structural imbalance

Consent remains a central lawful basis under Article 6 GDPR. As defined in Article 4(11), it must be freely given, specific, informed, and unambiguous.

In practice, these conditions are difficult to satisfy.

Information asymmetries persist. Data practices are complex and opaque. Refusal may entail exclusion from essential services. The imbalance of power between individuals and large platforms raises questions about whether consent can genuinely function as an expression of autonomy.

The result is a divergence between formal legality and substantive control.

The relational dimension of privacy

Privacy law is structured around individual rights. Contemporary data practices are not.

Data is inherently relational. Genetic data implicates families. Communication data involves multiple parties. Aggregated datasets produce group-level inferences that affect individuals indirectly.

This creates a conceptual tension.

Legal rights are individual. The harms they address are increasingly collective.

The existing framework captures this dynamic only partially.

Temporal mismatch and continuous processing

Data protection law is organised around discrete stages: collection, processing, storage.

Modern systems operate continuously. Data is updated in real time. Models evolve dynamically. Inferences change over time.

Legal interventions, however, remain tied to specific moments: the point of collection, the giving of consent, the initiation of processing.

This creates a temporal mismatch between regulatory structure and technological reality.

Privacy as a fundamental right

Despite these tensions, privacy remains firmly embedded in the European legal order.

Article 7 of the Charter of Fundamental Rights of the European Union protects respect for private life. Article 8 establishes a distinct right to the protection of personal data. Article 8 of the European Convention on Human Rights provides parallel protection at the international level.

In Digital Rights Ireland (Joined Cases C-293/12 and C-594/12) and subsequent case law, the Court of Justice has emphasised that limitations on these rights must satisfy strict proportionality requirements under Article 52(1) of the Charter.

Privacy, therefore, retains constitutional status. What is changing is its operational meaning.

From control to inference

Privacy can no longer be understood solely as secrecy, nor adequately as control.

It increasingly concerns the conditions under which individuals are observed, interpreted, and acted upon.

The central regulatory challenge is not only access to data, but the production of knowledge from it.

Who may infer? On what basis? With what consequences?

These questions are only partially addressed within existing doctrine.

Conclusion

Privacy in 2026 has not diminished. It has evolved.

The law continues to provide rights, obligations, and safeguards. It imposes limits and enables redress.

But it operates within a technological environment that has outpaced the assumptions on which it was built.

The shift is conceptual.

Privacy is no longer primarily about protecting information from access. It is about regulating how information is transformed into knowledge and used to shape outcomes.

The challenge for law is not simply to extend existing protections.

It is to determine whether its current structure is adequate for a world in which the most significant privacy risks arise not from what is known—but from what can be inferred.
